The Monthly Metric: Risk Management Score

The 2015 white paper that introduced Institute for Supply Management®’s (ISM®) Mastery Model describes risk, one of the model’s 16 core competencies, as something that should be “systematically identified, analyzed and assessed throughout the global supply chain in terms of probability, with options generated to reduce or mitigate” it.

In the two years that Inside Supply Management® has presented The Monthly Metric, one of the themes has been the perceived paucity of procurement analytics that identify, analyze and assess risk. Recent natural disasters, geopolitical turbulence and cyberattacks have raised demand for KPIs that measure an organization’s ability to respond to disruptions. A metric that can help, however, is one that’s been around for a while.

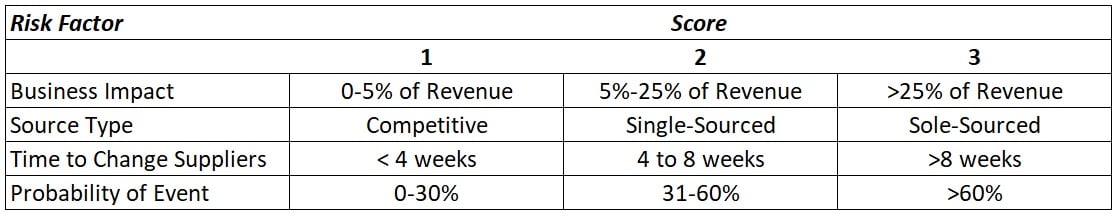

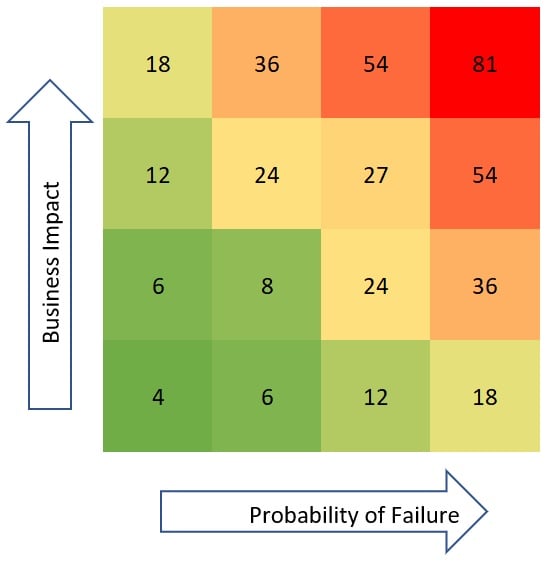

There are multiple versions of the risk management score; ISM’s was put together by Lane Burkitt, ISM Global Director, Learning and Development. The score is compiled by rating each of four risk factors on a scale of 1 to 3, multiplying those ratings and locating the result on a “heat map” that plots the probability of a disruption against its potential impact on the business.

The map helps organizations determine their biggest risk hot spots by supplier and location — and start generating options to avoid or mitigate a disruption, Burkitt says: “If you have a plan, you can execute it. You can look at it and know where you are potentially exposed. It might be in a region where there’s not a good business case for (preemptively) pulling out, but if something happens, you know what you’re going to do. You can shift production, develop a new supplier or accelerate development of one, and do what else needs to be done.”

The Value of Measuring Probability

The four risk factors are (1) business impact of a supply interruption; (2) source type: competitive, single-sourced or sole-sourced; (3) time to change suppliers; and (4) probability of an event. Other risk management score models do not account for event probability because some analysts feel it’s a subjective measure that can be used to manipulate the score, but Burkitt says evaluating probability is necessary to get the best picture.

“Probability requires looking at the market and geopolitical conditions,” Burkitt says. “It’s not easy to quantify. You have to do a lot of market analysis. … The reason you need to do probability is because all risks are not equal. If you’re doing a chart where you’re looking at risk, one of the (factors) needs to be probability, because if not, only the suppliers with the highest spend will get your attention, not the highest risk.”

Also, probability and revenue impact are intertwined. A disruption involving a 3-cent casting can have a significant revenue impact if it goes in every product a company makes, but only if it’s available from just one or two suppliers. If a company can get the casting from dozens of suppliers, the event probability is low. “So, even though it’s an important piece of the equation, probability is not factored into some other models,” Burkitt says.

After each of the four factors is assigned a score of 1 to 3 based on the table above, the scores are multiplied to determine the risk management score. For example, a company that (1) faces a disruption revenue impact of 15 percent on (2) a sole-sourced part, (3) can change suppliers in less than four weeks and (4) has an event probability of 25 percent has an overall score of 6. Based on the heat map below, that’s a favorable result.
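The multiplication can be sketched in a few lines of Python. This is a minimal illustration, not ISM's tool: the function and factor names are hypothetical, and because the scoring table is a figure that is not reproduced in the text, the individual ratings in the example are only one assumed assignment that is consistent with the overall score of 6.

```python
from math import prod

# The four risk factors named in the article (identifier names are hypothetical).
FACTORS = ("business_impact", "source_type", "time_to_change", "event_probability")


def risk_management_score(factor_scores: dict[str, int]) -> int:
    """Multiply the four 1-to-3 factor ratings into one score (range 1 to 81)."""
    missing = set(FACTORS) - factor_scores.keys()
    if missing:
        raise ValueError(f"missing factor ratings: {sorted(missing)}")
    for name in FACTORS:
        if factor_scores[name] not in (1, 2, 3):
            raise ValueError(f"{name} must be rated 1, 2 or 3")
    return prod(factor_scores[name] for name in FACTORS)


# Assumed ratings for illustration only; the real ratings come from ISM's table.
example = {
    "business_impact": 2,
    "source_type": 3,        # assumed rating for a sole-sourced part
    "time_to_change": 1,     # assumed rating for a switch in under four weeks
    "event_probability": 1,
}
print(risk_management_score(example))  # 6
```

Because the factors are multiplied rather than added, a single rating of 3 triples the overall score, which is why a sole-sourced part can still score favorably when the other three ratings are low.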

Burkitt says that a detailed risk-management plan is not required for scores in the green squares. For the yellow squares, it’s up to a company’s discretion. For the orange and red squares, a management plan is essential.
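Burkitt's guidance reduces to a lookup by heat-map color. The sketch below is a hypothetical Python rendering of that rule; the names and return strings are assumptions, and it deliberately does not convert numeric scores to colors, because the heat map's score thresholds are not stated in the text.

```python
# Plan requirement by heat-map color, per Burkitt's guidance.
# Dictionary and function names are hypothetical.
PLAN_REQUIREMENT = {
    "green": "not required",
    "yellow": "at the company's discretion",
    "orange": "essential",
    "red": "essential",
}


def plan_requirement(color: str) -> str:
    """Return whether a detailed risk-management plan is needed for a color."""
    return PLAN_REQUIREMENT[color.strip().lower()]


print(plan_requirement("yellow"))  # at the company's discretion
```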

Taking the Metric Further

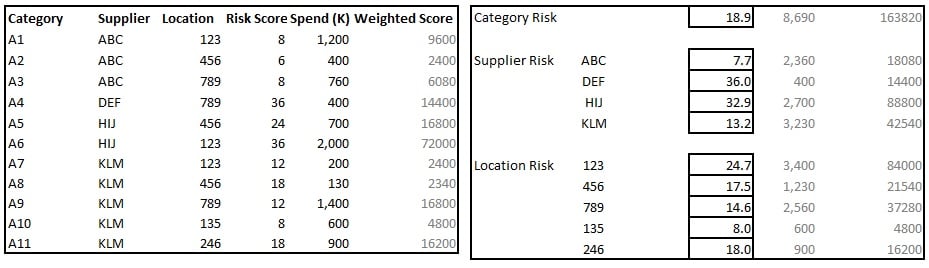

The risk management score can be most useful when aggregated by category, supplier and location, as shown by the examples in the charts below. And when weighted by spend, the scores can help a company determine whether it is too invested in one location, a potentially valuable insight given that natural disasters are impossible to predict. In the example below, the simple average of the risk scores at supplier HIJ’s two locations is 30, but because most of the spend is at the higher-risk location, the spend-weighted score is 32.9.
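The spend weighting is an ordinary weighted average. The Python sketch below shows the arithmetic under stated assumptions: the function name is hypothetical, and the scores and spend figures are invented for illustration, since the chart data behind supplier HIJ's 32.9 is not given in the text.

```python
def spend_weighted_score(locations: list[tuple[float, float]]) -> float:
    """Average risk scores across locations, weighting each by its spend.

    Each entry is a (risk_score, spend) pair for one location.
    """
    total_spend = sum(spend for _, spend in locations)
    if total_spend <= 0:
        raise ValueError("total spend must be positive")
    return sum(score * spend for score, spend in locations) / total_spend


# Hypothetical supplier with two locations: (risk score, annual spend).
locations = [(24, 100_000), (36, 300_000)]

simple_average = sum(score for score, _ in locations) / len(locations)
print(simple_average)                   # 30.0
print(spend_weighted_score(locations))  # 33.0
```

As in the article's example, the weighted figure exceeds the simple average because three-quarters of the assumed spend sits at the higher-risk location.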

In such charts, some location risks are self-evident: for example, a plant in Venezuela that is vulnerable to a geopolitical disruption. Others can be more nuanced, Burkitt says, such as a location that houses a single stamping tool when there is no cost benefit to developing another tool for a different location. “Relying on that one tool increases the risk,” Burkitt says. As a result, he adds, a company must either accept the risk or conclude that it makes sense, even though the cost benefit isn’t there, to develop a new stamping tool for a new location.

The risk management score arms practitioners with the information to communicate risk assessments to a company’s senior leadership — to evaluate “the probability and consequences of the risk and use the data to prioritize risk-elimination activity,” as the ISM Mastery Model® white paper states of professionals who have achieved mastery in the risk core competency. However, Burkitt says that assessments should be based on the level of risk a company — not an individual practitioner — can stomach.

“Every company has a different risk appetite,” he says. “And that risk appetite needs to be part of how you decide what's high risk and what's low risk. I can’t look at this based on my risk appetite. My risk appetite may be much higher or much lower than the company's. I should use the same definitions (the company) uses — what’s defined as the risk appetite for the organization. It's still going to be somewhat subjective, but it's more likely to be consistent across locations.”

To suggest a metric to be covered in the future, leave a comment on this page or email me at dzeiger@instituteforsupplymanagement.org.